EPM Automate At a Glance

EPM Automate enables Service Administrators to remotely perform tasks within Oracle Enterprise Performance Management Cloud environments such as PBCS, PCM, FCCS, ARCS and more. When combined with scripts and a scheduler, a company can create automated programs that quickly perform jobs which are typically tedious, time-consuming and prone to human error. This can reduce costs and allows your leadership to allocate more time and resources to higher-ROI projects.

Let's say that on the first Friday of every month you perform tasks for multiple cubes: you load multiple files associated with the previous month's data, run business rules and prepare the cubes for reporting. This periodic routine can take up the whole day, or even multiple days when errors are made. We can schedule the entire day's processes and tasks to run automatically. We can even make it faster by telling the system when a new file is available for upload.

Updating Data

You can automate the system to update with the latest Budget files, newest metadata or most recent Actuals. You can fetch the latest files based on different qualifiers, such as when the file was last modified, or by using a naming convention that lets a script extract information from the file name, such as an incremental number.
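The two qualifiers above can be sketched in a few lines of Python. This is a minimal illustration, not part of EPM Automate itself: the function names are hypothetical, and the pattern assumes file names follow a `<Category><MM>-<DD>-<YY>.txt` convention, which is only a guess at how a name like Actuals12-02-19.txt is meant to be read.

```python
import re
from datetime import date
from pathlib import Path
from typing import Iterable, Optional

# Assumed naming convention: <Category><MM>-<DD>-<YY>.txt, e.g. Actuals12-02-19.txt
NAME_PATTERN = re.compile(
    r"^(?P<category>[A-Za-z]+)(?P<mm>\d{2})-(?P<dd>\d{2})-(?P<yy>\d{2})\.txt$"
)


def date_from_name(filename: str) -> Optional[date]:
    """Return the date embedded in the file name, or None if it does not match."""
    match = NAME_PATTERN.match(filename)
    if match is None:
        return None
    return date(2000 + int(match["yy"]), int(match["mm"]), int(match["dd"]))


def latest_by_name(filenames: Iterable[str]) -> Optional[str]:
    """Pick the file whose name carries the most recent date."""
    dated = [(date_from_name(name), name) for name in filenames]
    dated = [(d, name) for d, name in dated if d is not None]
    return max(dated)[1] if dated else None


def latest_by_mtime(folder: Path, pattern: str = "*.txt") -> Optional[Path]:
    """Pick the most recently modified file in a folder."""
    files = list(folder.glob(pattern))
    return max(files, key=lambda p: p.stat().st_mtime) if files else None
```

Selecting by name is more predictable when files are copied or re-saved, since copying can change the modification time; selecting by modification time needs no naming discipline at all.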

Intelligent Systems

We can design the system to derive the Category (Actual, Budget, Average, etc.) by using variables. This creates an Intelligent System that performs data management and business rule processes for each category using the same script. A system should always be designed with respect to its internal components and external environment. Total Quality Management emphasizes the benefits of reducing variance and bottlenecks from all of the participants in that environment. One potential bottleneck for a script would be having to fill in information such as which period to start on and which period to end on in a data load, or which Fiscal Year, Scenario and Version to use on an Aggregation Rule.
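As a rough sketch of how such variables might be derived rather than typed in, the Python below infers a Category from a file-name prefix and computes the previous month's period label from the run date. The prefix-to-Category mapping and the `Mon-YY` period format are assumptions made for illustration; a real application would use its own member names.

```python
import re
from datetime import date

# Hypothetical mapping from file-name prefix to Category member.
CATEGORY_BY_PREFIX = {"Actuals": "Actual", "Budget": "Budget", "Averages": "Average"}

MONTHS = ["Jan", "Feb", "Mar", "Apr", "May", "Jun",
          "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"]


def derive_category(filename: str) -> str:
    """Map the leading letters of the file name to a Category."""
    prefix = re.match(r"[A-Za-z]+", filename)
    if prefix is None or prefix.group(0) not in CATEGORY_BY_PREFIX:
        raise ValueError(f"Cannot derive a Category from {filename!r}")
    return CATEGORY_BY_PREFIX[prefix.group(0)]


def previous_period(run_date: date) -> str:
    """Return the month before run_date as a label such as 'Nov-19'."""
    year, month = run_date.year, run_date.month - 1
    if month == 0:  # January rolls back to December of the prior year
        year, month = year - 1, 12
    return f"{MONTHS[month - 1]}-{year % 100:02d}"
```

With these two helpers, nobody has to enter the Category or the start and end periods by hand before each run.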

Data Management

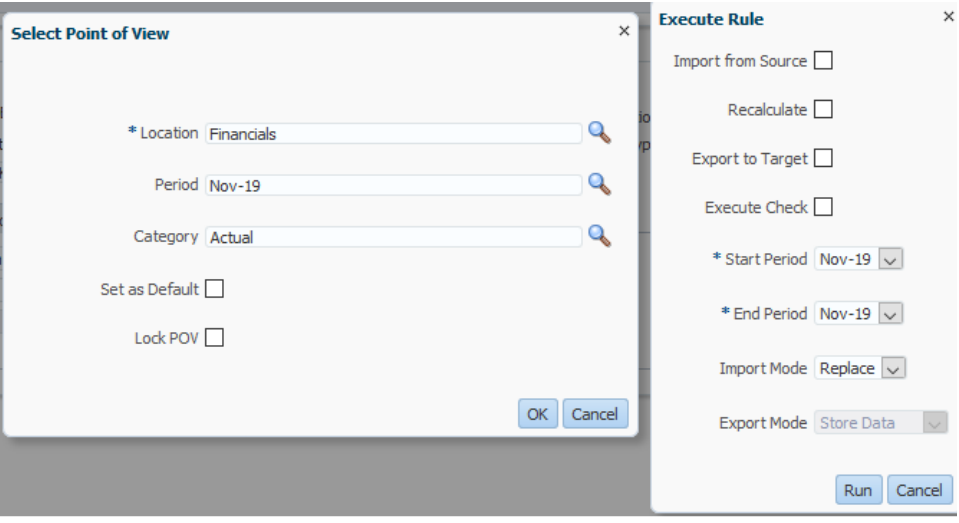

Let's say we want to load a file named Actuals12-02-19.txt. The Data Management process will ask for the Category, periods, file name, etc.

Simplified Interface

The Periods, Years and file names will always change, but we can use variables to let the system update on its own. Here is an example of the same data load rule, except that the Data Management job runs on a schedule and autonomously selects the proper parameters.

Dynamic Programming

epmautomate rundatarule %Category% %prd%-%Yr:~2,2% %prd%-%Yr:~2,2% REPLACE STORE_DATA %ImportFileActuals%

EPM Automate Script that uses Dynamic Variables

The script can be reused without modification for the January 2020 Averages file, or for a Budget file that will be created 5 years from today, if you schedule the system to run that long in the background. That is the power of a dynamic system.
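To make the substitution concrete, here is a small Python sketch that assembles the same argument list as the command above from plain values. The helper name is hypothetical and the sample values are illustrative; the argument order simply mirrors the command shown in this article.

```python
from typing import List


def build_rundatarule_args(
    rule_name: str,
    start_period: str,
    end_period: str,
    file_name: str,
    import_mode: str = "REPLACE",
    export_mode: str = "STORE_DATA",
) -> List[str]:
    """Assemble the argument list in the same order as the command above."""
    return [
        "epmautomate", "rundatarule",
        rule_name, start_period, end_period,
        import_mode, export_mode, file_name,
    ]


# Illustrative values only; in practice these would be derived at run time.
args = build_rundatarule_args("Actual", "Dec-19", "Dec-19", "Actuals12-02-19.txt")
print(" ".join(args))
```

Only the values passed in change from month to month. The list could then be handed to `subprocess.run(args, check=True)`, assuming `epmautomate` is on the PATH and a session is already logged in.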

Logs and Reports

Even the best systems run into errors, so it is important to know when an error occurs, how to troubleshoot the error and how to keep an audit trail of the jobs, processes and status for reporting purposes.

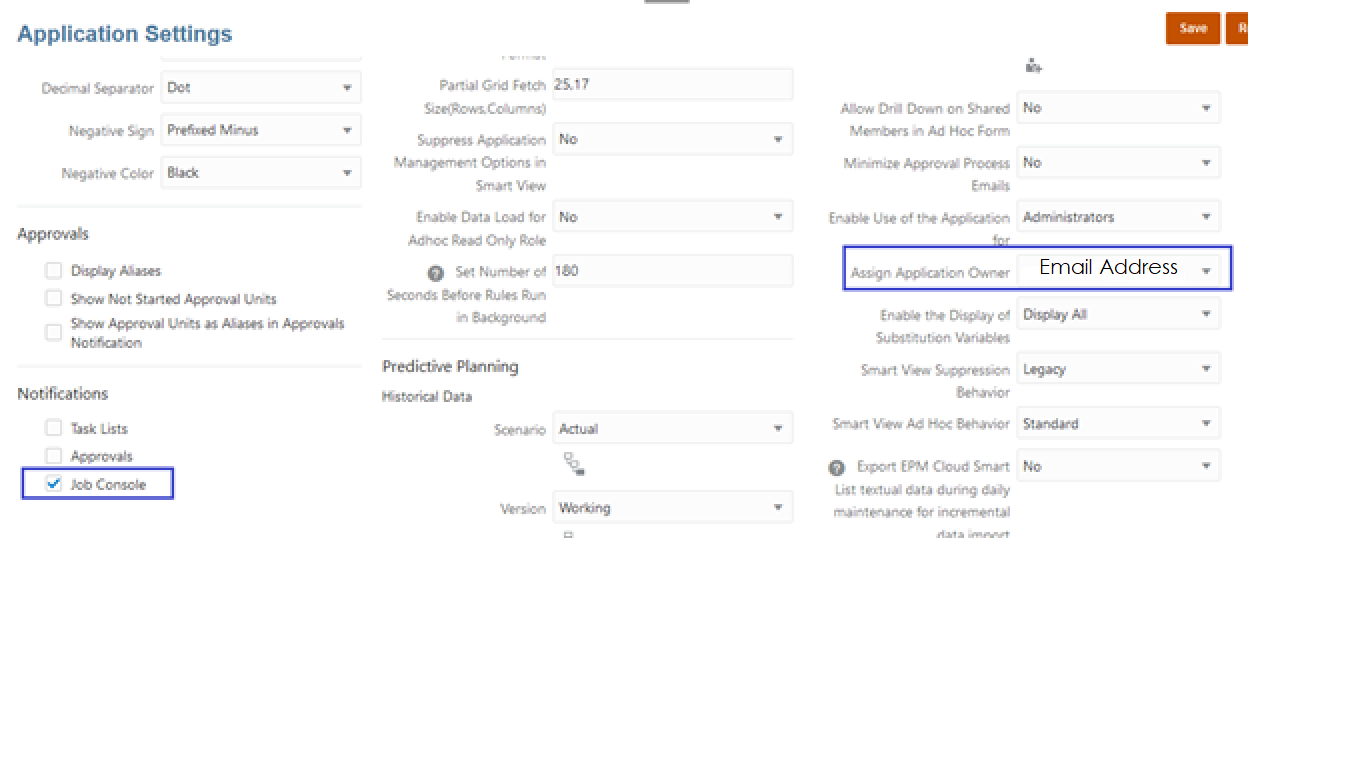

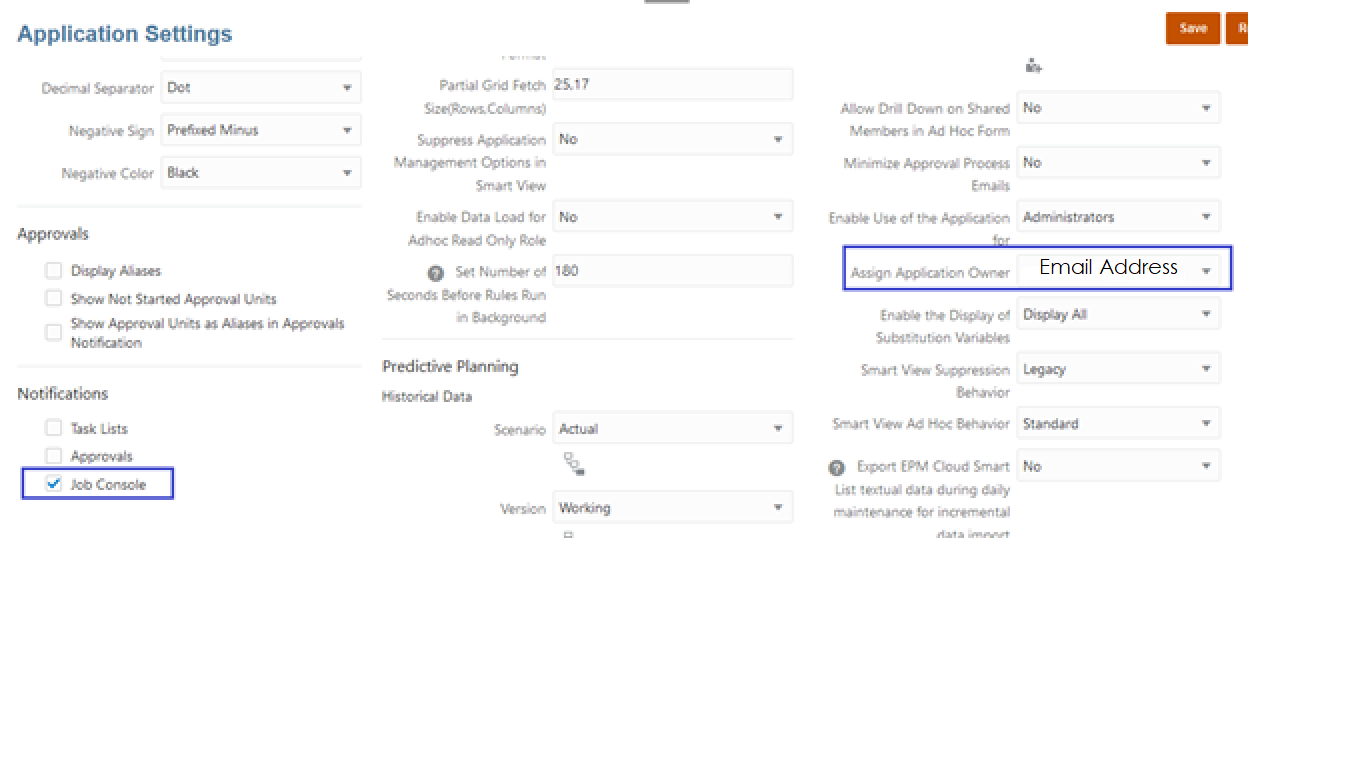

Email Notifications

In Application settings, select the Admin who will receive email notifications and the types of notifications to send. If an error occurs, the Admin can address it immediately. If the automation runs smoothly, the Admin can verify that everything is well with the status report.

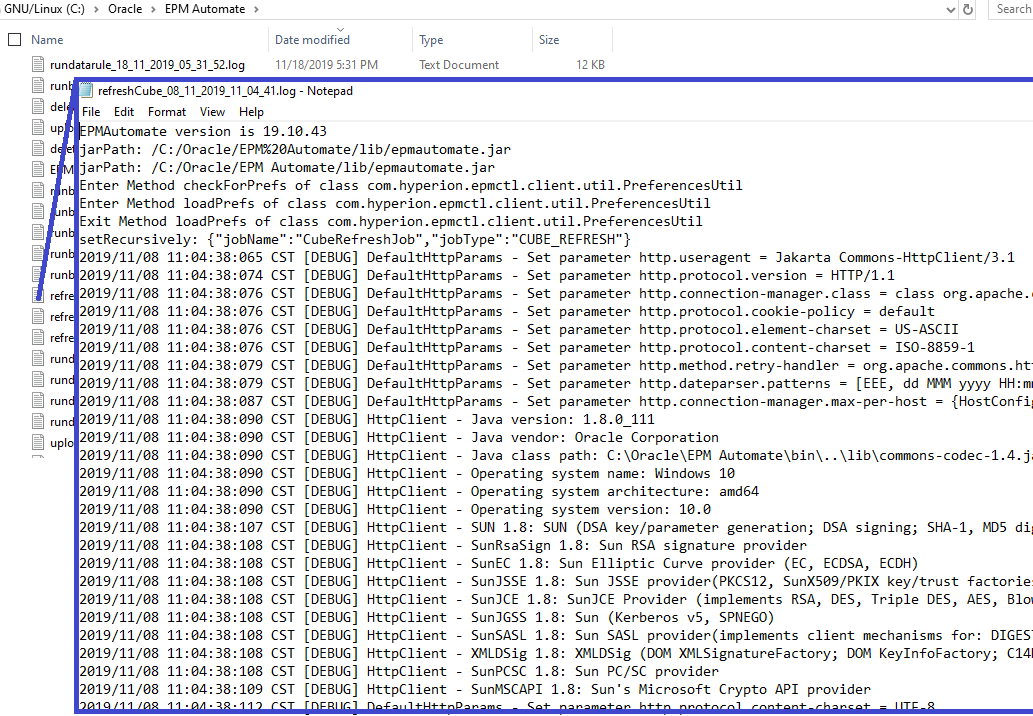

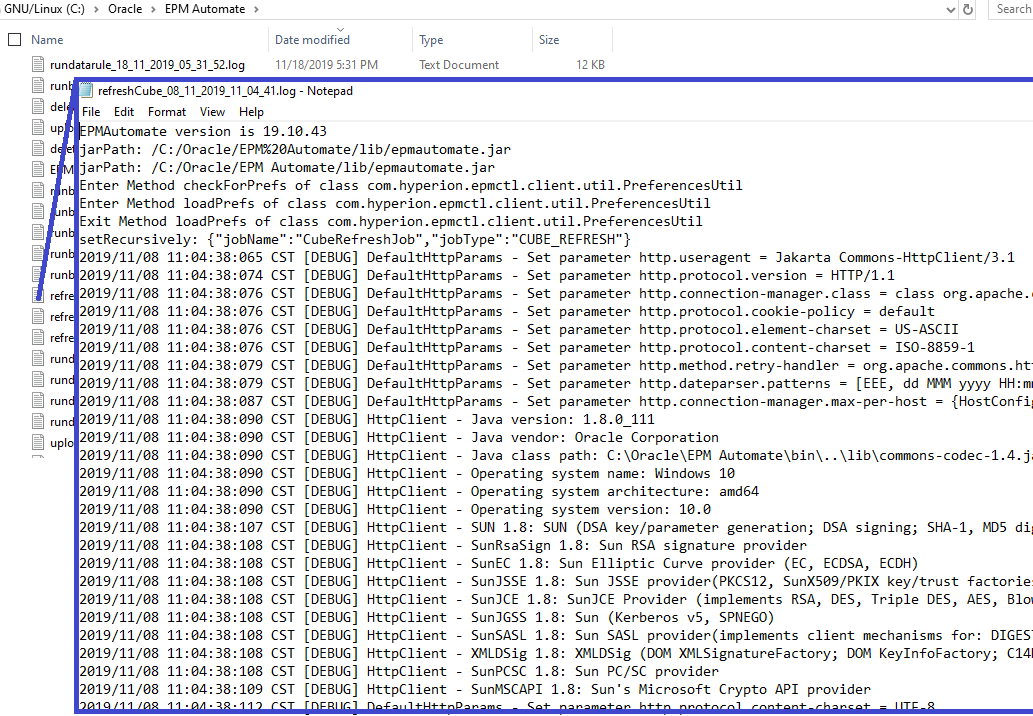

Error Logs

If you do get an error, you can access a local repository of error codes, organized by the date and time at which they occurred, without having to log into PBCS. This saves time and, when handled correctly, lets you avoid further complications by improving the system's design.

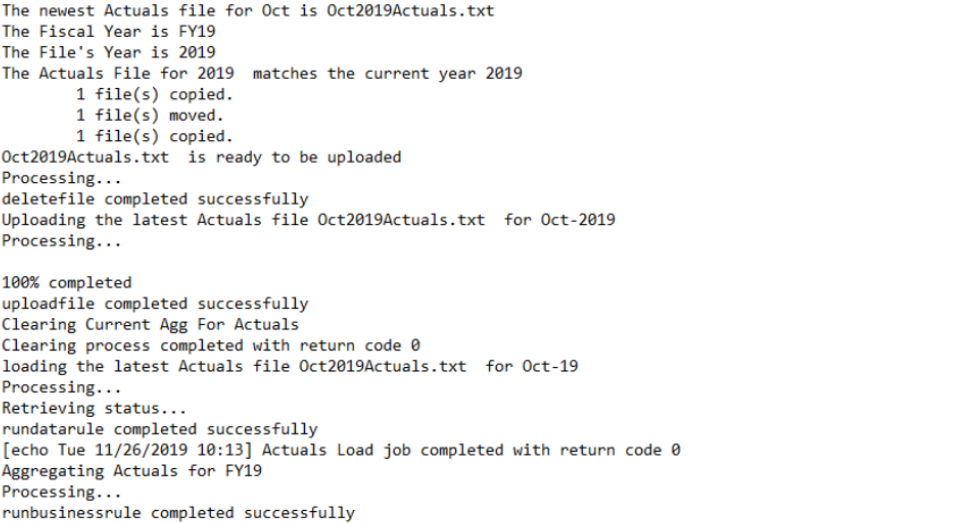

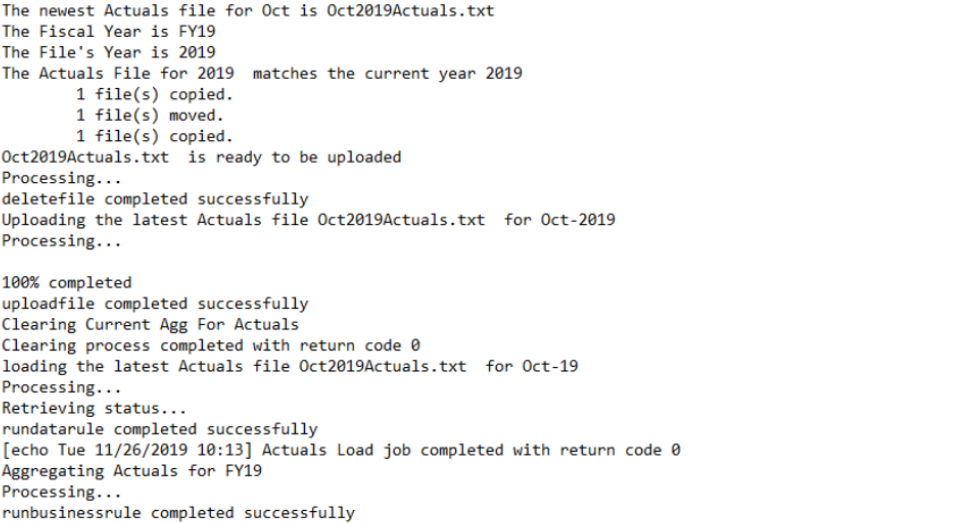
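A local error log of this kind can be approximated with a thin wrapper around each command. The Python sketch below is one possible shape, assuming the command signals failure with a non-zero exit code; the function name and the tab-separated log layout are hypothetical.

```python
import subprocess
from datetime import datetime
from pathlib import Path
from typing import Sequence


def run_and_log(args: Sequence[str], error_log: Path) -> int:
    """Run a command; on a non-zero exit code, append a timestamped line to error_log."""
    result = subprocess.run(list(args), capture_output=True, text=True)
    if result.returncode != 0:
        stamp = datetime.now().isoformat(timespec="seconds")
        # Collapse the captured output to one line so each failure is one log entry.
        detail = " ".join((result.stderr or result.stdout).split())
        with error_log.open("a", encoding="utf-8") as log:
            log.write(f"{stamp}\texit={result.returncode}\t{' '.join(args)}\t{detail}\n")
    return result.returncode
```

Because each failure is a single timestamped line, the file can be searched or filtered by date without opening the application.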

Historical Activity

You can keep records of each data load, metadata update, business rule, etc. in a chronological text file. This allows you to audit a specific cube or Category to keep track of what files were used, what rules were executed and the outcomes. You can even timestamp each process to compare the speed of automation vs. human effort.
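One way to produce such a chronological file is to wrap every step in a small timing helper. The Python sketch below is an illustration under assumed names (`recorded`, the history file layout); it records the start time, step name, outcome and elapsed seconds for each step.

```python
import time
from contextlib import contextmanager
from datetime import datetime
from pathlib import Path


@contextmanager
def recorded(step: str, history: Path):
    """Append one line per step: start time, step name, outcome, elapsed seconds."""
    started = datetime.now()
    clock = time.perf_counter()
    outcome = "OK"
    try:
        yield
    except Exception:
        outcome = "FAILED"
        raise  # the failure still propagates to the caller
    finally:
        elapsed = time.perf_counter() - clock
        with history.open("a", encoding="utf-8") as log:
            log.write(f"{started:%Y-%m-%d %H:%M:%S}\t{step}\t{outcome}\t{elapsed:.1f}s\n")
```

A step would then be written as `with recorded("Load Actuals", Path("history.log")): ...`, and the elapsed times in the file give a baseline to compare against the manual process.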